Most Companies Don’t Need AI Agents

Most companies don’t need AI agents.

There. I said it.

(Yes, I know. Very brave. Please alert the innovation police.)

Right now, the market is having one of those deeply spiritual technology moments where every normal business process suddenly needs to become “agentic.”

Customer support flow? Agent.

Sales research workflow? Agent.

Internal reporting? Agent.

Someone renamed a Zapier automation with an LLM step inside it? Apparently also an agent.

Very powerful. Very futuristic. Very webinar-friendly.

But what’s actually happening in many companies is much less cinematic.

A lot of “AI agents” being deployed in real businesses are just automations with an LLM stapled on top and a more expensive name.

Because apparently “workflow automation” doesn’t get invited to the cool kids’ table anymore.

But “agentic infrastructure”?

Now we’re cooking. Now we have a category.

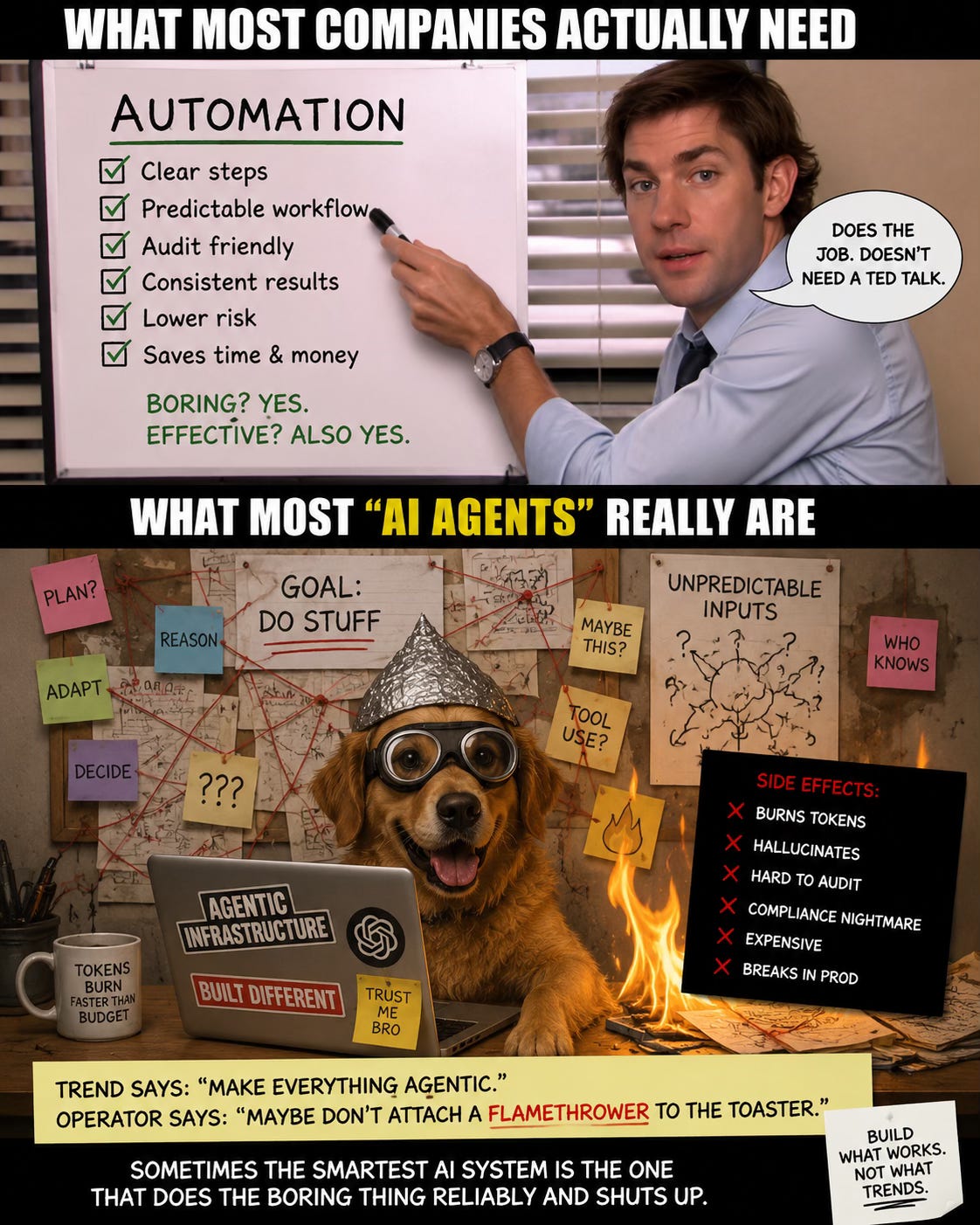

The Boring Stuff Is Working

The companies quietly getting real value from AI are usually not deploying some magical autonomous goblin that thinks, plans, reasons, files your SOC 2 evidence, and saves Western civilization before lunch.

They’re doing boring things well:

They’re cleaning up repetitive workflows.

Reducing manual work.

Speeding up internal processes.

Keeping humans in the right places.

Making sure someone can audit what happened.

Disgusting, I know. Actual business value.

And this is where a lot of the agent hype starts to wobble.

Because once you move past the demo, the question is not:

“How autonomous can this be?”

The better question is:

“Does this need autonomy at all?”

Most of the time, the answer is no.

The Expensive Agent Problem

I keep seeing the same pattern.

A founder spends serious money on a “next-gen AI agent” that sounds incredible in the pitch deck.

It can reason.

It can plan.

It can adapt.

It can make decisions.

It can probably write a poem about your pipeline if you ask nicely.

Then it enters the real world and immediately starts behaving like a Roomba in a room full of cables.

It burns tokens like a yacht burns fuel.

It breaks the second a customer behaves like a customer.

It becomes difficult to test, hard to audit, and almost impossible to explain clearly when someone asks the tiny, annoying question:

“So what exactly does this thing do?”

And that question matters.

Especially if you’re building inside a company where compliance, risk, security, and customer trust are not decorative LinkedIn words.

Regulated SaaS Makes This Even Funnier

In regulated SaaS, the agent conversation gets especially entertaining.

HIPAA and SOC 2 reviewers do not want a TED Talk about emergent reasoning.

They want the boring stuff:

What happens?

In what order?

Under what controls?

Where are the logs?

An automation can survive that conversation.

An agent often turns it into a six-month compliance escape room with worse lighting.

And this is not because agents are inherently bad.

It’s because autonomy adds uncertainty.

Uncertainty adds review time.

Review time adds cost.

Cost adds meetings.

Meetings add spiritual damage.

Very simple chain of events.
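Here’s a minimal Python sketch of what “boring and auditable” can look like. It has a fixed list of steps that always run in the same order, one JSON log entry per step, and a human-review flag as the control. The refund-style record, the step names, and the 1,000 threshold are all hypothetical. A real system would also write to a durable audit store, not an in-memory list.

```python
import json
from datetime import datetime, timezone
from typing import Callable

# A step takes a record and returns an updated copy. Hypothetical shape.
Step = Callable[[dict], dict]


def normalize_email(record: dict) -> dict:
    return {**record, "email": record["email"].strip().lower()}


def flag_for_review(record: dict) -> dict:
    # The control: anything over a (hypothetical) threshold goes to a human.
    return {**record, "needs_human_review": record["amount"] > 1000}


# What happens, and in what order: a fixed, reviewable list.
STEPS: list[tuple[str, Step]] = [
    ("normalize_email", normalize_email),
    ("flag_for_review", flag_for_review),
]


def run_workflow(record: dict, audit_log: list[str]) -> dict:
    """Run every step in order and append one JSON audit entry per step."""
    for name, step in STEPS:
        record = step(record)
        audit_log.append(json.dumps({
            "step": name,
            "at": datetime.now(timezone.utc).isoformat(),
            "record": record,
        }))
    return record


log: list[str] = []
result = run_workflow({"email": " Jane@Example.com ", "amount": 1500}, log)
print(result["needs_human_review"])  # True
print(len(log))  # 2
```

Nothing clever happens here, and that’s the point. A reviewer can read `STEPS` and know exactly what runs.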

My Rough Rule

Here’s how I’d think about it.

Clear workflow?

Use automation.

High cost of error?

Use automation.

Compliance review?

Use automation. Twice. Drink water.

Unpredictable inputs, branching decisions, and actual judgment required?

Maybe you need an agent.

Maybe.

That’s the part the market keeps skipping.

Agents are useful when the workflow genuinely requires dynamic reasoning, adaptation, and decision-making.

But many business workflows do not.

They require consistency.

Controls.

Speed.

Visibility.

Repeatability.

Logs.

Basically all the words that make Twitter fall asleep but make operators quietly nod.
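If it helps to see the rough rule written down, here’s a toy sketch in Python. The field names and the example workflow are made up for illustration, and real decisions are messier than six booleans:

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    # Hypothetical traits, named for illustration only.
    clear_steps: bool            # Is the workflow well-defined?
    high_cost_of_error: bool     # Is a wrong action expensive?
    compliance_review: bool      # Will auditors or reviewers look at it?
    unpredictable_inputs: bool
    branching_decisions: bool
    needs_judgment: bool


def recommend(w: Workflow) -> str:
    """Toy version of the rough rule: automation by default,
    an agent only when inputs, branching, and judgment all demand it."""
    if w.clear_steps or w.high_cost_of_error or w.compliance_review:
        return "automation"
    if w.unpredictable_inputs and w.branching_decisions and w.needs_judgment:
        return "maybe an agent"  # Maybe.
    return "automation"


# A hypothetical messy-inbox triage job: no clear steps, low stakes, no audit.
triage = Workflow(
    clear_steps=False,
    high_cost_of_error=False,
    compliance_review=False,
    unpredictable_inputs=True,
    branching_decisions=True,
    needs_judgment=True,
)
print(recommend(triage))  # maybe an agent
```

Note the ordering. The automation checks run first, so a workflow that needs judgment but also faces a compliance review still lands on automation. That mirrors the rule’s bias; whether it’s the right bias for your business is the actual conversation.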

The Trend Says “Make Everything Agentic”

The trend says:

“Make everything agentic.”

The operator in me says:

“Maybe don’t attach a flamethrower to the toaster.”

Because autonomy is not automatically maturity.

Sometimes autonomy is just chaos with better branding.

And sometimes the smartest AI system is the one that does the boring thing reliably and shuts up.

That doesn’t sound as exciting.

It probably won’t get 4,000 likes from people who say “the future of work” five times before breakfast.

But it might actually work.

The Market Is Getting Smarter

I think the first wave of AI adoption was mostly curiosity.

What can we automate?

What can we generate?

What can we replace?

What can we call an agent before someone notices it’s a workflow with a fancy hat?

The next wave is different.

The founders and operators who got burned are asking better questions now.

Not:

“How do we use agents?”

But:

“What actually needs autonomy here?”

Where do we need judgment?

Where do we need reliability?

Where do we need a human in the loop?

Where do we need auditability more than cleverness?

Where is an LLM useful?

And where are we just adding a very expensive raccoon to a process that was already fragile?

That’s the discussion worth having.

Not whether agents are good or bad.

But where they actually belong.

Because most companies don’t need more AI theatre.

They need systems that work when nobody is watching the demo.

And right now, a lot of the value is still hiding in the boring automations.

Which is unfortunate, because boring doesn’t trend.

But it does ship.

So I’m curious.

What’s actually working for you right now?

And what keeps breaking the moment it leaves the demo?